Multimodal time-series model for activity recognition and yield estimation

Developed and deployed a multimodal time-series CNN-LSTM model that analyzes 3,000+ hours of sensor data to recognize worker activity and productivity, followed by a large-scale data pipeline that processes 100+ million data points to estimate strawberry yield and generate yield maps at commercial scale. Details: (Bhattarai et al., 2025) and (Bhattarai et al., 2025).

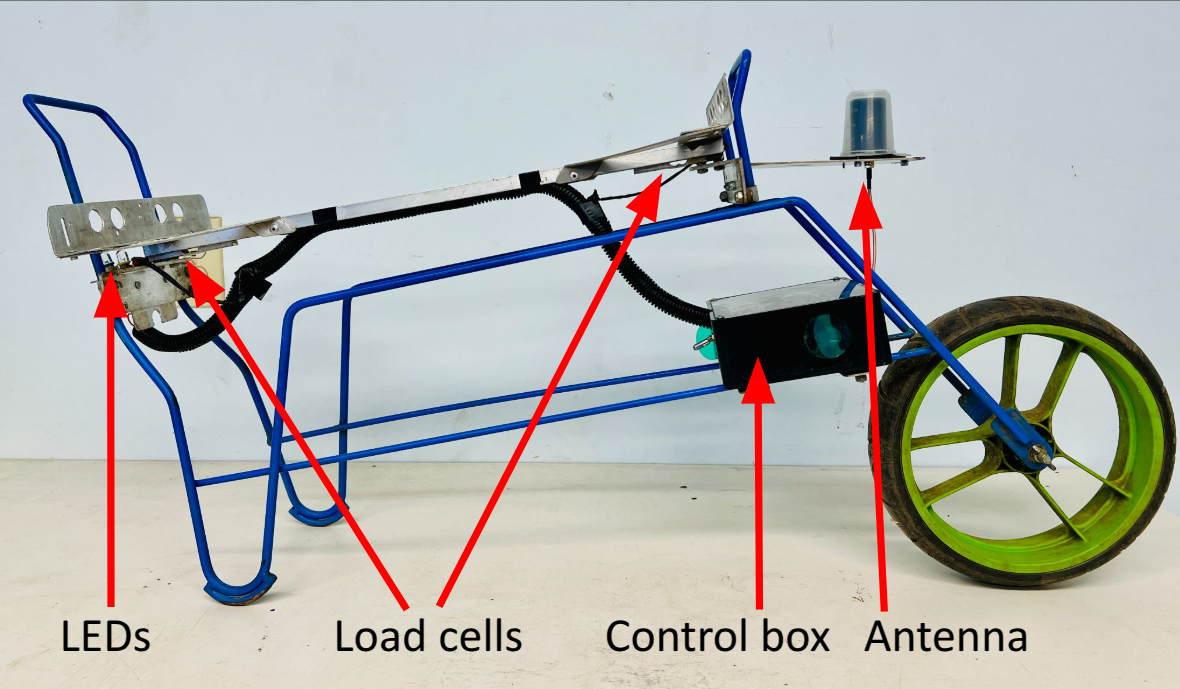

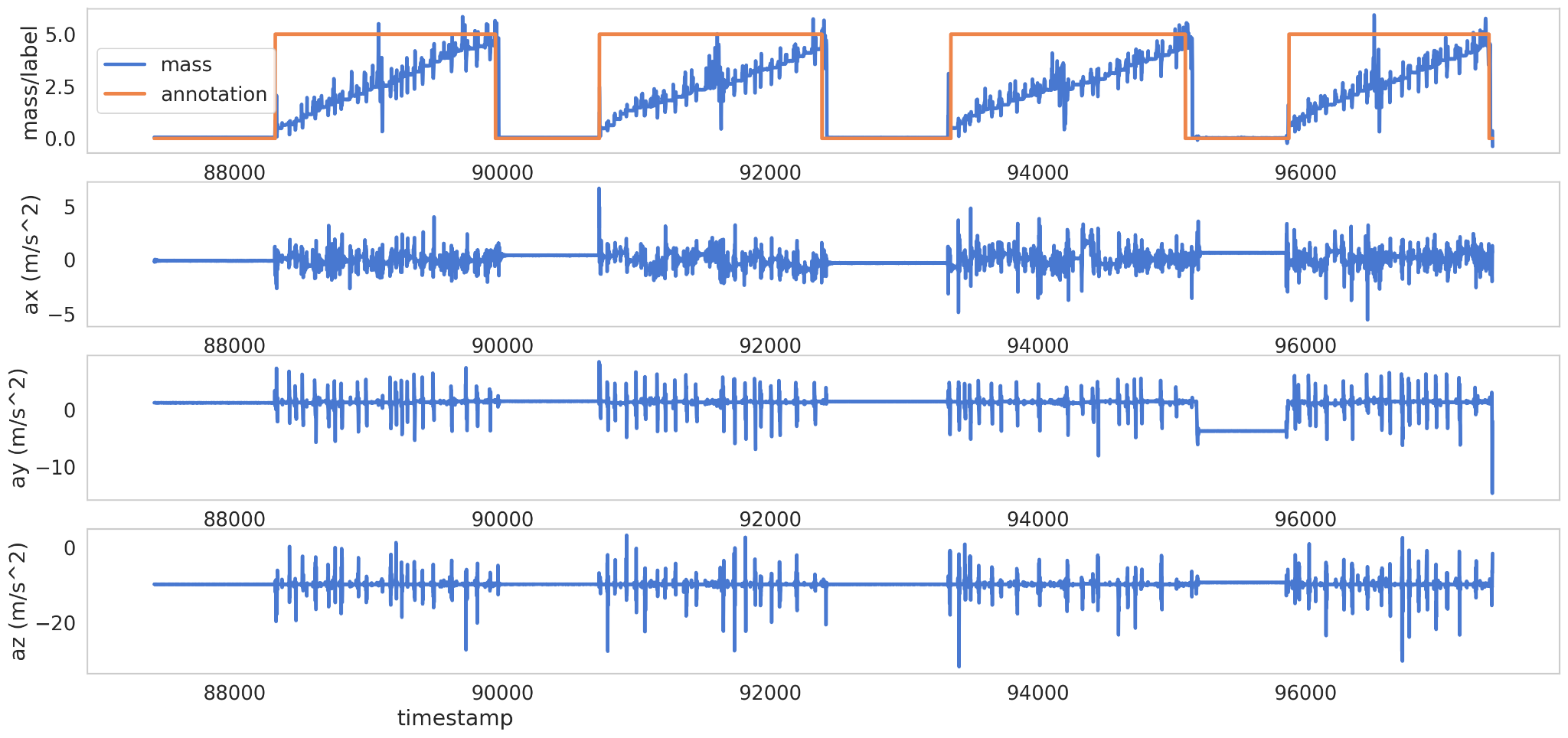

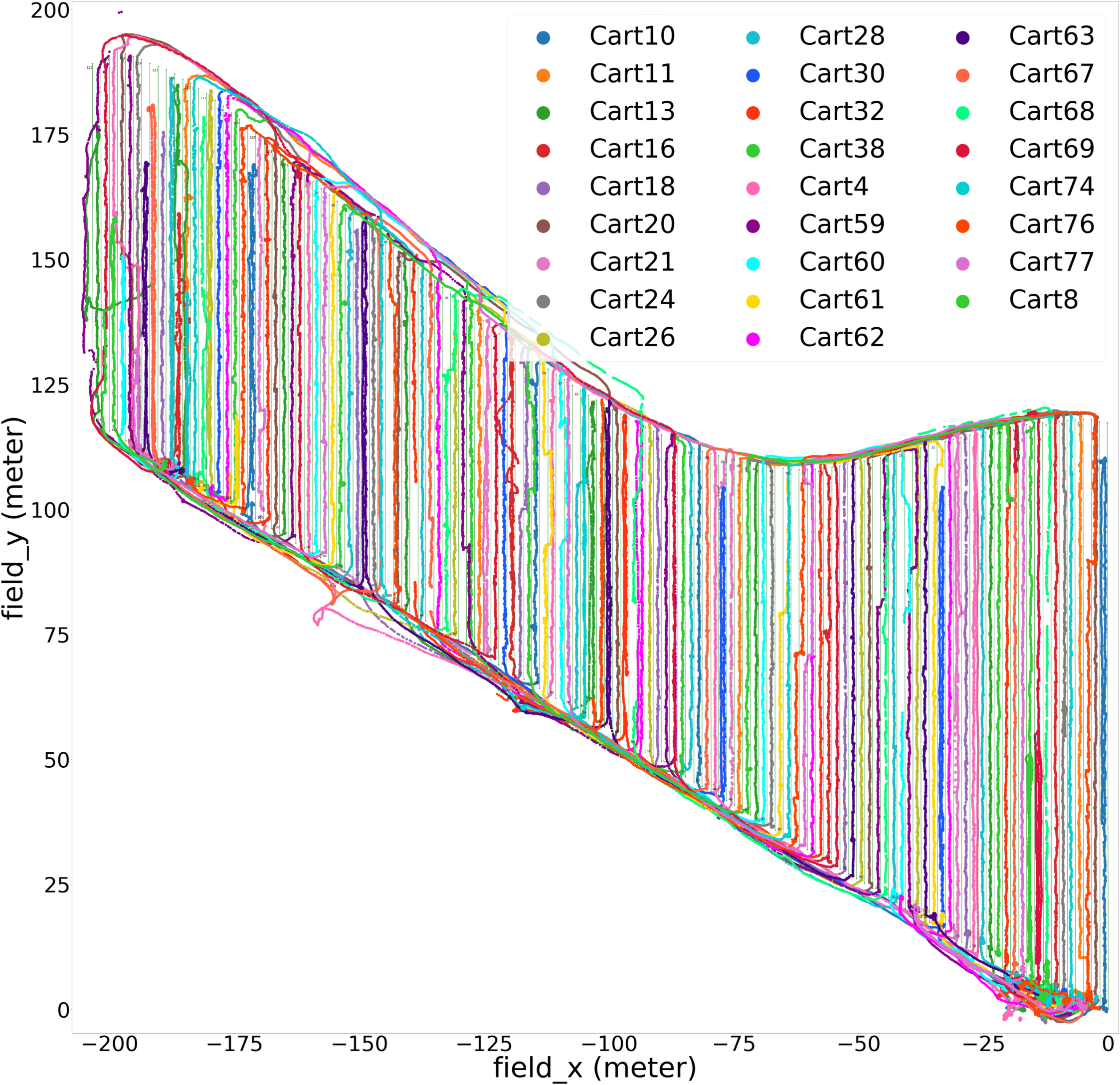

Deployed over 60 sensor-integrated picking carts across 17,862 m² of commercial strawberry field during a multi-month harvest season (April–October). Each cart was equipped with GPS, 3D accelerometers, and load cells, and collected data continuously at 10 Hz for 8–10 hours per harvest day, twice a week, resulting in terabytes of multimodal data. The data was streamed to a cloud-based server and processed by the pipeline. One of the major design decisions was to make the system low-cost, scalable, and practical for commercial use. Instead of high-cost RTK-GPS units, we adopted GPS modules that use the freely available Satellite-Based Augmentation System (SBAS), with a rated circular error probable (CEP) of 0.75 meters.
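As a rough illustration of how a 10 Hz multichannel stream like the one described above can be prepared for a sequence model, the sketch below cuts a recording into fixed-length, overlapping windows. Only the 10 Hz sampling rate comes from the text. The window length, stride, channel count, and the `make_windows` helper are assumptions made for this example, not the project's actual settings.

```python
import numpy as np

SAMPLE_RATE_HZ = 10   # sampling rate stated in the text
WINDOW_S = 10         # hypothetical window length in seconds
STRIDE_S = 5          # hypothetical stride in seconds (50% overlap)


def make_windows(signal, window, stride):
    """Split a (n_samples, n_channels) array into overlapping windows.

    Returns an array of shape (n_windows, window, n_channels).
    """
    signal = np.asarray(signal)
    if len(signal) < window:
        # Recording is too short to produce a single full window.
        return np.empty((0, window, signal.shape[1]))
    starts = np.arange(0, len(signal) - window + 1, stride)
    return np.stack([signal[s:s + window] for s in starts])


# One minute of placeholder data with 4 hypothetical channels
# (for example 3 accelerometer axes plus 1 load-cell reading).
one_minute = np.zeros((60 * SAMPLE_RATE_HZ, 4))
windows = make_windows(
    one_minute,
    window=WINDOW_S * SAMPLE_RATE_HZ,
    stride=STRIDE_S * SAMPLE_RATE_HZ,
)
print(windows.shape)
```

Overlapping windows are a common choice for activity recognition because they reduce the chance that a short event is split across a window boundary; the right window size depends on the duration of the activities being recognized.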

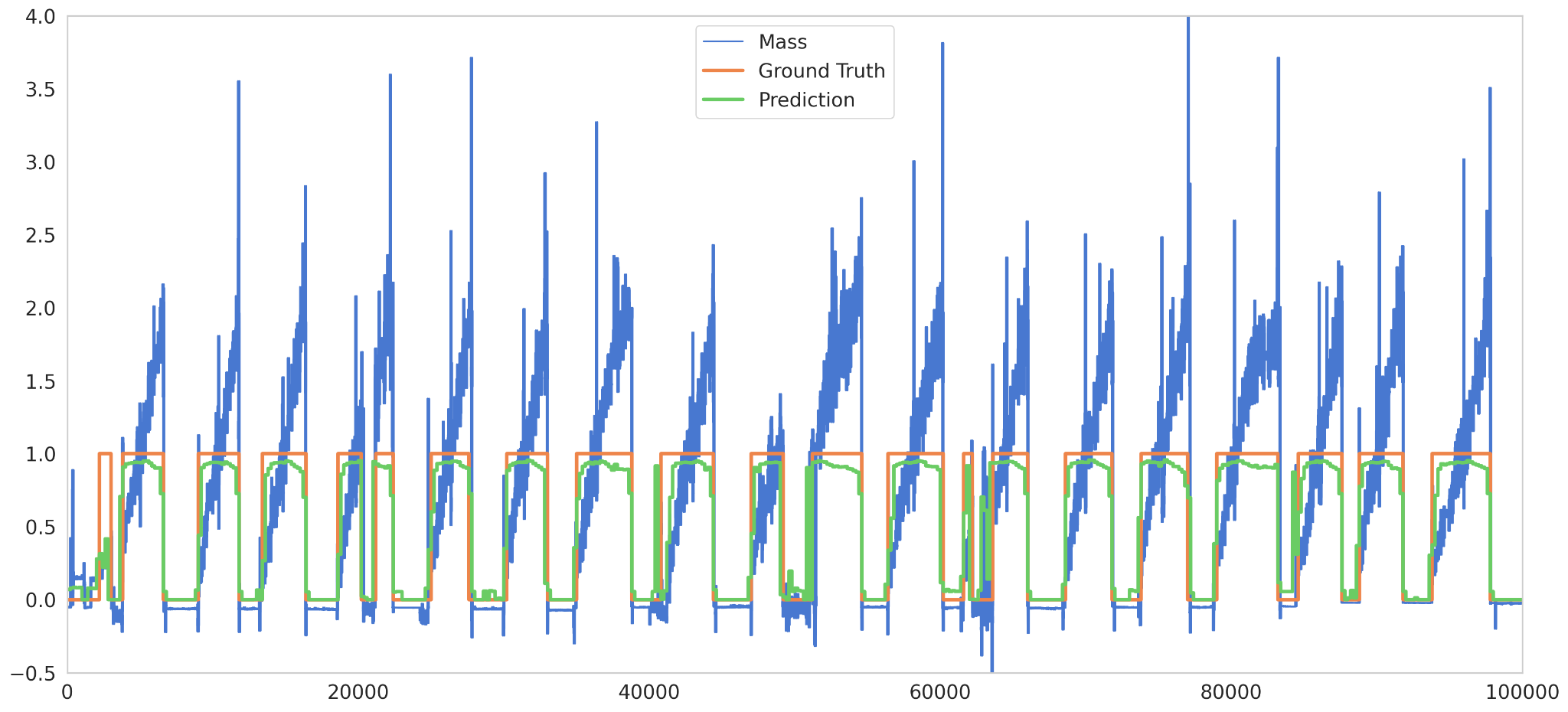

The developed multimodal time-series CNN-LSTM model achieved an accuracy of over 97% in recognizing worker activity. The deep learning model was integrated with unsupervised algorithms to develop a large-scale data pipeline to estimate yield and generate high-fidelity yield maps. Mathematical formulations were developed to model typical worker patterns and were used to correct errors caused by the manual nature of harvesting, sensor limitations, and variation due to how pickers handled the sensing modules.
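For readers unfamiliar with the CNN-LSTM pattern mentioned above, here is a minimal Keras sketch: 1D convolutions extract short-range features from each window, and an LSTM summarizes how those features evolve over time. The layer sizes, window length, channel count, number of activity classes, and the `build_cnn_lstm` helper are all hypothetical and are not taken from the published model.

```python
import numpy as np
import tensorflow as tf
from tensorflow.keras import layers


def build_cnn_lstm(window, n_channels, n_classes):
    """Build a small CNN-LSTM classifier for windows of sensor data.

    All layer sizes are illustrative assumptions, not the published design.
    """
    return tf.keras.Sequential([
        tf.keras.Input(shape=(window, n_channels)),
        # Convolutions learn local motion and load patterns.
        layers.Conv1D(32, kernel_size=5, padding="same", activation="relu"),
        layers.MaxPooling1D(pool_size=2),
        layers.Conv1D(64, kernel_size=5, padding="same", activation="relu"),
        layers.MaxPooling1D(pool_size=2),
        # The LSTM models how the convolutional features change over time.
        layers.LSTM(64),
        # One probability per activity class.
        layers.Dense(n_classes, activation="softmax"),
    ])


# Hypothetical sizes: 100-sample windows, 4 channels, 3 activity classes.
model = build_cnn_lstm(window=100, n_channels=4, n_classes=3)
model.compile(
    optimizer="adam",
    loss="sparse_categorical_crossentropy",
    metrics=["accuracy"],
)

# Forward pass on a placeholder batch to check the output shape.
dummy_batch = np.zeros((2, 100, 4), dtype="float32")
probabilities = model(dummy_batch)
```

The model expects input of shape `(batch, window, n_channels)` and returns one row of class probabilities per window.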

The estimated yield was more than 94% accurate and correlated strongly (Pearson r = 0.99) with the ground-truth data.
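The sketch below shows one way to compute metrics of this kind. It assumes accuracy is defined as 100% minus the mean absolute percentage error, which may differ from the definition used in the cited papers. The `yield_metrics` helper and the sample numbers are made up for illustration and are not results from the study.

```python
import numpy as np


def yield_metrics(estimated, measured):
    """Return (accuracy_pct, pearson_r) for paired yield values.

    Accuracy is assumed to be 100 * (1 - mean absolute relative error).
    """
    estimated = np.asarray(estimated, dtype=float)
    measured = np.asarray(measured, dtype=float)
    rel_error = np.abs(estimated - measured) / measured
    accuracy_pct = 100.0 * (1.0 - rel_error.mean())
    # Off-diagonal entry of the 2x2 correlation matrix is Pearson r.
    pearson_r = np.corrcoef(estimated, measured)[0, 1]
    return accuracy_pct, pearson_r


# Made-up yield values (kg), for illustration only.
measured = [120.0, 95.0, 143.0, 110.0]
estimated = [115.0, 99.0, 140.0, 118.0]
accuracy_pct, pearson_r = yield_metrics(estimated, measured)
print(f"accuracy = {accuracy_pct:.1f}%, Pearson r = {pearson_r:.3f}")
```

The two metrics answer different questions: Pearson r measures how well the estimates track the measured values, while the percentage accuracy also penalizes a consistent over- or under-estimate, so they are best read together.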

SKILLS: Python, TensorFlow, MLflow, AWS, Docker, Recurrent Neural Network (RNN), Long Short-Term Memory (LSTM), NumPy, Pandas, Scikit-learn, QGIS, Raspberry Pi

References

2025

- arXiv preprint arXiv:2503.22809, 2025

- arXiv preprint arXiv:2504.02846, 2025